Artificial intelligence is reshaping how companies create, protect, and profit from their intellectual property. The technology that powers everything from design tools to drug discovery is also creating legal gray areas that didn’t exist five years ago.

At Pierview Law, we’re seeing businesses struggle with questions that have no clear answers yet: Who owns content an AI generates? Can you legally train AI on copyrighted material? These aren’t theoretical problems anymore-they’re affecting real companies right now.

The Three IP Ownership Problems AI Creates Right Now

Who Actually Owns AI-Generated Content

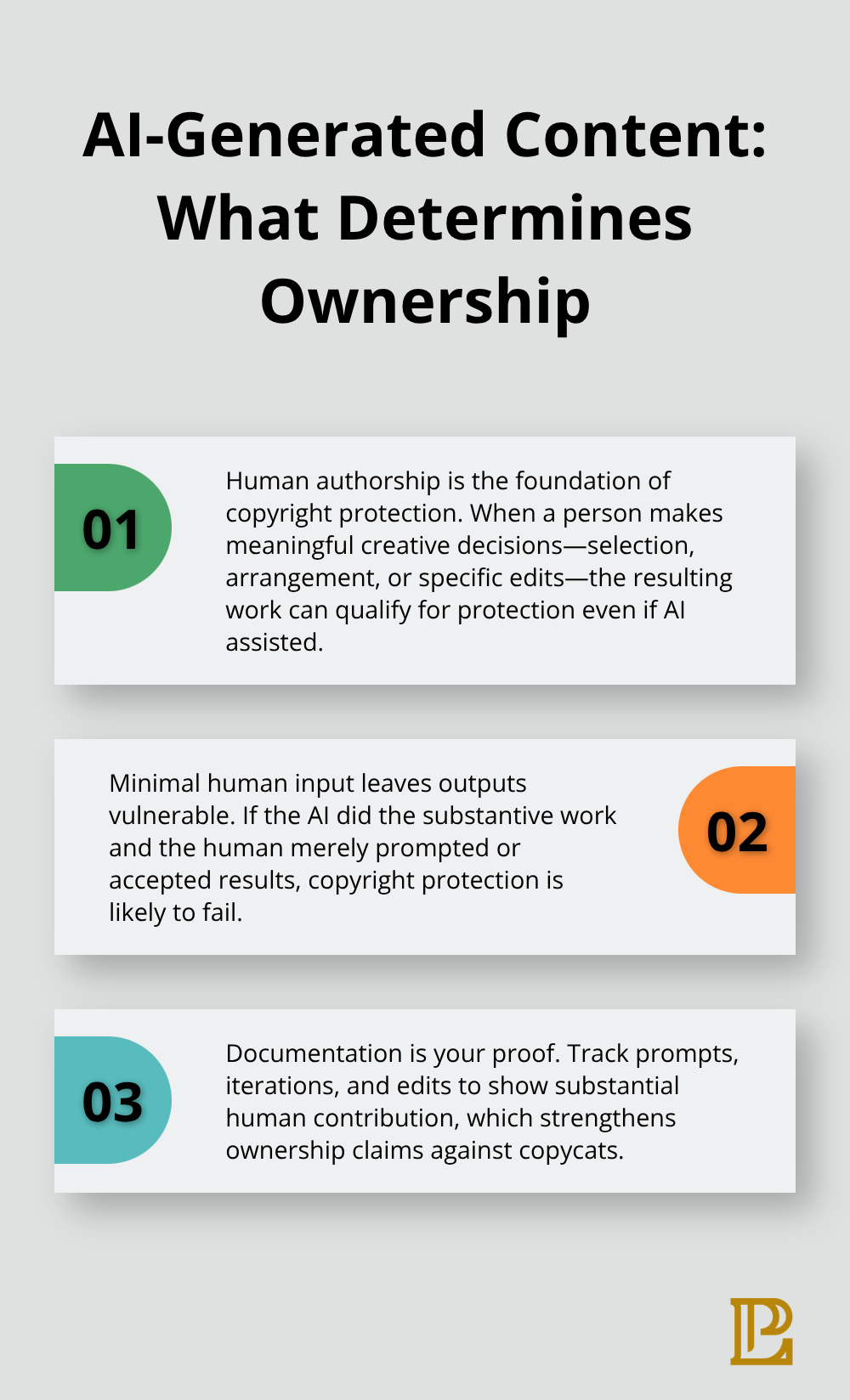

The U.S. Copyright Office registered over 100 AI-assisted works as of early 2024, but here’s what matters: almost none of them were purely AI-generated. The distinction determines who owns what your AI creates. If a human made meaningful creative decisions during the process, copyright protection applies. If the AI did the work with minimal human input, protection disappears.

This isn’t a minor technicality. Companies discover that outputs from tools like DALL-E or ChatGPT may have zero legal protection unless they document substantial human authorship. The Copyright Office examined cases like Théâtre D’opéra Spatial and Zarya of the Dawn and rejected protection because human contribution was too thin. Your AI-generated marketing copy, design asset, or code might be legally defenseless against competitors who copy it.

Ownership questions don’t get resolved in your favor by default-you must prove human creative input was substantial enough to matter legally.

Training Data Creates Immediate Legal Exposure

Training data presents a separate problem. Getty Images sued Stability AI for using millions of copyrighted images without permission, and similar litigation expands across the industry. The question isn’t theoretical anymore: can you legally feed copyrighted material into your AI system to improve its performance?

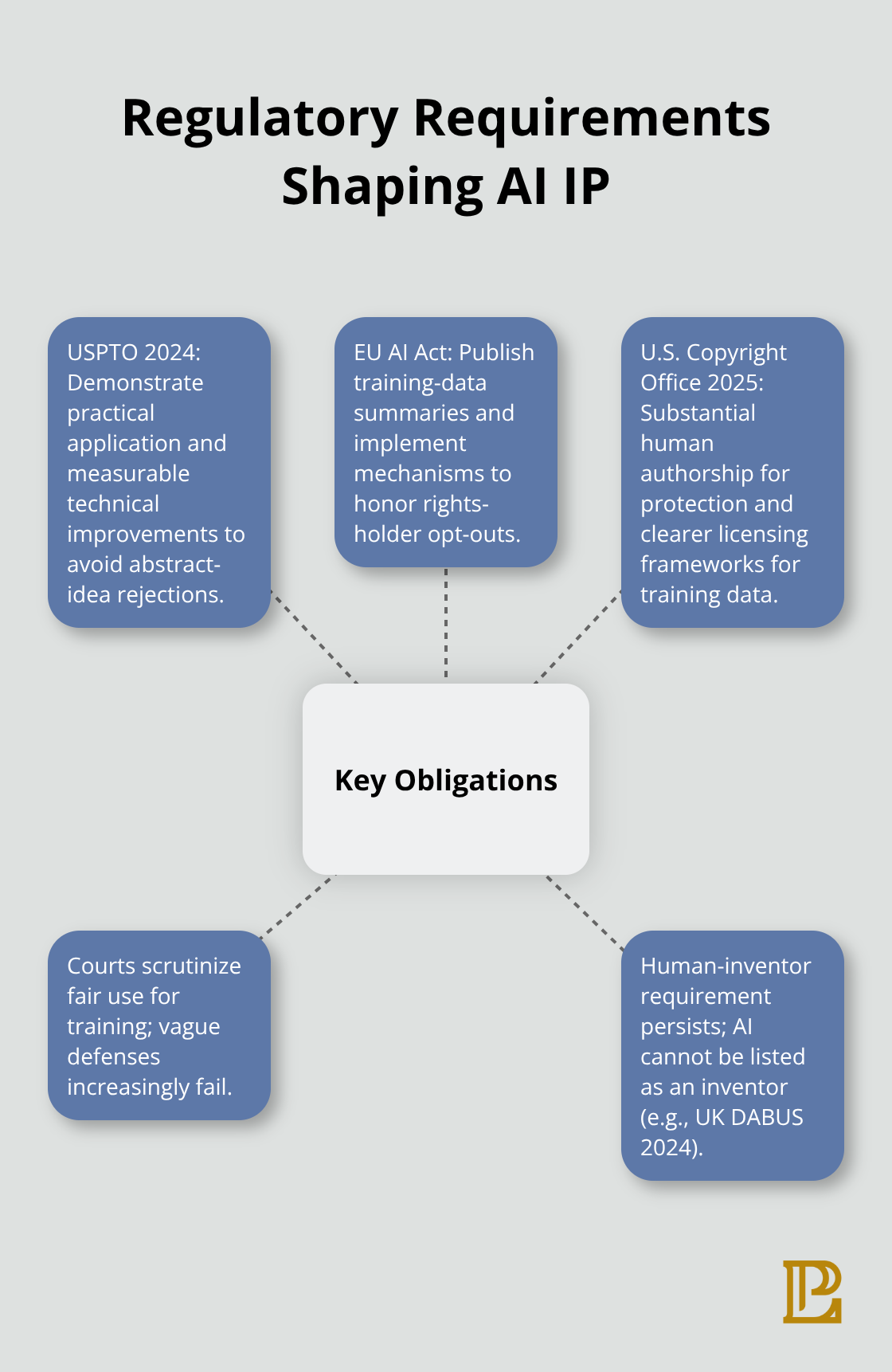

U.S. fair use doctrine might protect some training scenarios, but courts haven’t settled this definitively yet. The European Union took a different approach through its AI Act, which requires companies to publish training-data summaries and honor opt-out requests from rights holders. Sony Music and other publishers already use opt-out mechanisms to prevent their content from being used for AI training. This means your training pipeline faces real legal risk if you don’t verify data sources and secure proper licenses.

Patent Protection Faces a Human Inventor Requirement

Patent protection for AI inventions faces its own obstacle course. The UK Supreme Court ruled in 2024 that AI cannot be listed as an inventor on patents, which means your AI system’s breakthrough discovery has zero patent protection unless a human takes credit for it. The USPTO’s July 2024 guidelines clarified that AI inventions need to show practical application beyond mental processes, but many companies file claims that get rejected.

Your AI might solve a real problem faster than humans ever could, yet that solution may not be patentable if you frame it incorrectly. The solution requires careful claim drafting that emphasizes measurable technical improvements-faster computations, increased efficiency, or improved accuracy. Companies that document their AI development processes in detail (including data sources, methodology, and version updates) strengthen their patent position considerably. These three ownership gaps force businesses to rethink how they develop, deploy, and protect AI systems. The legal landscape won’t stabilize on its own, which is why companies need concrete strategies now rather than waiting for courts to decide.

How Businesses Actually Protect AI IP Right Now

Establishing Ownership Before Projects Launch

Companies that wait for perfect legal clarity will lose competitive advantage. The businesses protecting themselves now establish ownership frameworks before AI projects launch. This means defining in writing who owns outputs when the AI generates content, whether that’s the company, the contractor, or the tool provider. OpenAI’s terms assign output rights to users, but other platforms differ significantly. Your contracts with AI tool vendors must specify ownership explicitly because silence creates disputes later. Without clear written agreements, you risk losing control of work your company invested resources to create.

Treating Training Data as a Licensing Problem

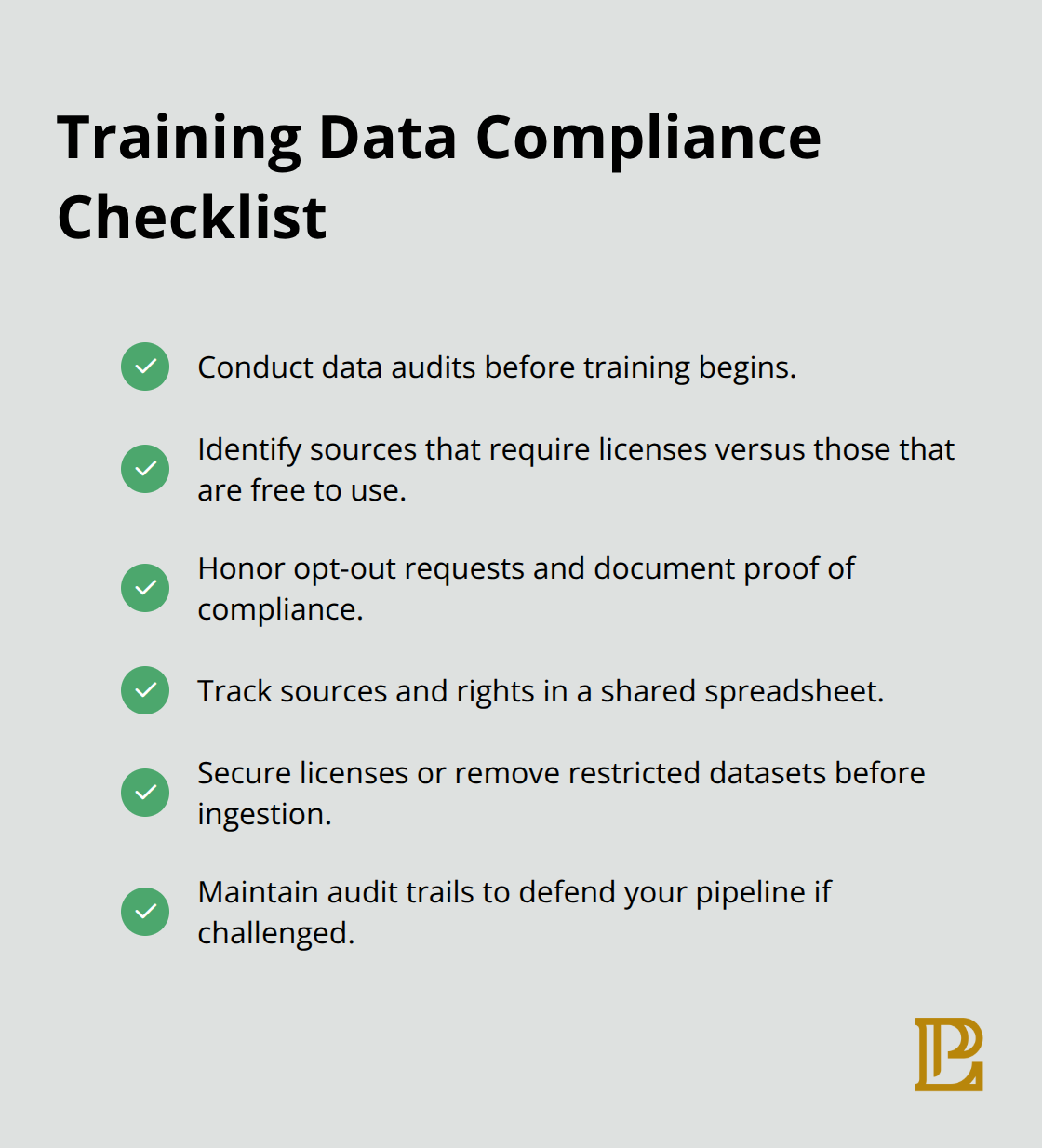

Companies treat training data as a licensing problem, not a fair use gamble. Getty Images v Stability AI showed that assuming fair use protection is dangerous. Organizations now conduct data audits before training, identifying which sources require licenses and which can be used freely. The EU AI Act’s opt-out mechanism for publishers means your training pipeline needs documented proof that you honored rights holders’ preferences. Teams implementing this practice maintain spreadsheets tracking data sources, usage rights, and licensing agreements. This documentation becomes your defense if someone challenges your training practices later.

Documenting Development to Strengthen Patent Claims

Patent examiners and courts need to see human decision-making at every stage. The USPTO’s July 2024 AI patent guidelines emphasize that measurable technical improvements matter more than raw innovation. Companies filing AI patents now include detailed records of algorithm selection, training methodology, version iterations, and performance benchmarks. This documentation proves the invention delivers practical results beyond mental processes, which separates patentable from unpatentable AI work. Organizations that track these details from day one file stronger applications and face fewer rejections.

Separating AI Governance From General IP Policy

One critical mistake: companies assume their existing IP policies cover AI. They don’t. Traditional policies address employee-created code or designs, not AI-generated outputs with ownership ambiguity. Updating policies requires defining what counts as human creative input for copyright purposes, establishing data source verification requirements, and creating templates for licensing agreements. Companies that separate AI governance from general IP policy move faster and catch problems earlier. This distinction forces teams to think specifically about AI risks rather than treating them as variations of familiar challenges.

Adapting to Regulatory Changes

The regulatory environment keeps shifting, so these practices aren’t permanent solutions. The U.S. Copyright Office released Part 2 of its report on generative AI in January 2025, examining whether AI outputs qualify for copyright protection. Part 3, addressing training data policy, arrived in May 2025 with recommendations for how training rights should be governed. These developments will reshape what’s permissible, making regular policy reviews essential rather than optional. As courts issue new rulings and governments introduce stricter requirements, the strategies that protect your company today may need adjustment tomorrow-which is why staying informed about legal developments matters as much as implementing current safeguards.

What Courts and Regulators Are Actually Deciding About AI IP

Court Decisions Set Real Consequences for Training Data

Court decisions move faster than legislation, and that creates immediate consequences for how you handle AI. The Getty Images case against Stability AI revealed that courts won’t accept vague fair use arguments anymore. Getty’s legal team demonstrated that Stability AI scraped millions of copyrighted images without permission, and the court refused to dismiss the case based on fair use protections. This signals that training data sources will face real scrutiny. Companies cannot assume fair use covers their entire training pipeline just because the doctrine exists.

The U.S. Copyright Office rejected the Théâtre D’opéra Spatial registration in September 2023, establishing that minimal human involvement fails to qualify for copyright protection, regardless of how complex the AI system is. More recently, the Copyright Office released Part 2 of its report on generative AI in January 2025, concluding that outputs created with generative AI require substantial human authorship to be copyrightable. Part 3, released in May 2025, addressed the training data question directly by recommending clearer licensing frameworks for how AI developers should source their training material. These aren’t abstract recommendations-they shape how courts will evaluate infringement claims against your company.

Government Agencies Issue Specific Patent and Transparency Requirements

Government agencies moved from studying AI to issuing specific guidance. The USPTO’s July 2024 AI patent guidelines created concrete criteria: your AI invention must show practical application beyond what a human could do mentally, and it must produce measurable technical improvements. Examiners now reject claims that describe AI performing abstract tasks without tangible results.

Internationally, the European Union’s AI Act established mandatory transparency requirements that apply even if your company operates outside Europe. If you train AI on copyrighted works, you must publish summaries of your training data and implement mechanisms to honor opt-out requests from rights holders. Sony Music already uses these opt-out provisions, meaning your training pipeline faces documented legal obligations if you want access to EU markets.

The Human Inventor Requirement Eliminates Patent Protection for AI-Only Work

The UK Supreme Court’s 2024 DABUS ruling eliminated any possibility of listing AI as an inventor on patents, forcing companies to identify human inventors explicitly or lose patent protection entirely. This convergence across courts and regulators signals that the gray areas are shrinking. What worked two years ago won’t work now, and what’s permissible today will likely face additional restrictions within eighteen months.

Final Thoughts

The intersection of AI and intellectual property creates immediate, concrete risks that affect your business today. Ownership disputes over AI-generated content, training data liability, and patent eligibility gaps reshape how companies operate right now. The U.S. Copyright Office’s January 2025 report confirmed that AI outputs require substantial human authorship to qualify for protection, while the UK Supreme Court’s 2024 ruling eliminated AI as a patent inventor.

Companies that protect themselves now establish ownership frameworks before projects launch, treat training data as a licensing problem rather than a fair use assumption, and document development processes to strengthen patent claims. They separate AI governance from general IP policy because traditional approaches fail to address AI-specific risks. They monitor regulatory changes because what regulators permit today will face additional restrictions within months.

Your business needs immediate action on three fronts: audit your current AI projects and define ownership in writing before disputes arise, verify your training data sources and secure proper licenses rather than assuming fair use covers your pipeline, and document your AI development process in detail to support patent applications. Pierview Law provides the business law guidance needed to navigate these challenges and protect your competitive position.